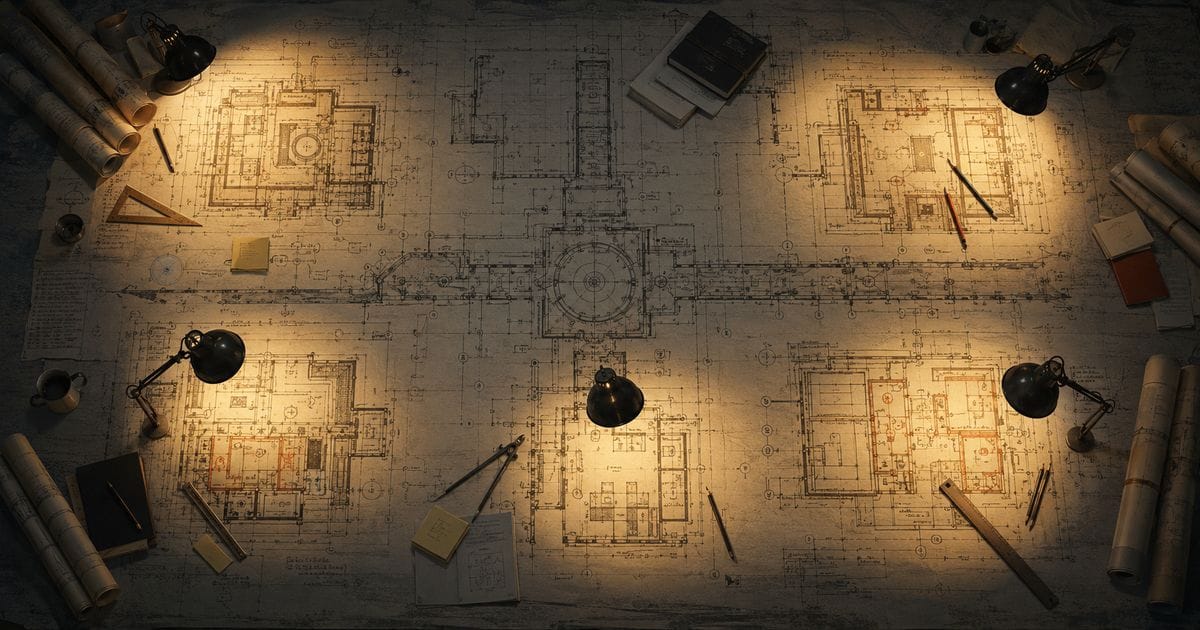

The Rearchitecture Your Team Could Never Justify

Every codebase has the rearchitecture that never gets prioritised. We split ours into six parallel workstreams and shipped it in eight days, start to finish. The hard part wasn't speed. It was coordination.

Every codebase has one. That architectural problem everyone knows about, everyone agrees needs fixing, and nobody can justify spending a quarter on.

For swamp, it was the extension catalog. Extension state was scattered across the filesystem, a SQLite catalog, a lockfile, and runtime registries. Nothing owned the "this extension is in state X" invariant. Five different code paths could write to the catalog. Five separate loaders — one per extension kind — each with duplicated logic that had drifted. And underneath all of it, V8's module-import cache was silently serving stale modules after a rebuild. The fix for that one had been "restart the process."

The bug reports looked like noise. "My extension didn't reload", "I see two versions of the same extension", "Swamp says my extension is broken when it isn't." It felt like a sleuth of bugs — apparently unrelated issues that turned out to be the same animal. Any of them fixable on their own. All of them together produced a combinatorial surface where fixing one layer reintroduced a symptom at another. We couldn't swashbuckle our way through it. We needed a better approach.

We fixed it in eight days, start to finish. Planning, implementation, testing, deployments (weekend included). Not in a branch, not in a freeze. Six workstreams, each with its own agent in a separate worktree, landing to main piece by piece while the team continued shipping other work. Every merge went straight to users and it caused no major issues.

Six parallel agents in separate worktrees will produce six locally-correct, mutually-incompatible solutions if nothing holds the cross-workstream contract. Speed wasn't the hard part. Coordination was.

Six workstreams, eight days

The only hard sequencing constraint was the first workstream: establishing the aggregate root and repository, which had to land before the rest could start. After that, they overlapped.

The first two days were the foundation. A proper aggregate root for extensions with seven distinct states, a single repository as the gateway, lifecycle services for install, remove, and upgrade with atomic ordering guarantees. Day two alone was eleven commits, including a cross-process concurrency stress test and a crash-state recovery harness.
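To make the idea of an aggregate root behind a single repository concrete, here is a minimal sketch. It is not swamp's code: the class names, the transition table, and the state names are all hypothetical, and only four placeholder states are shown rather than the real seven.

```typescript
// Illustrative only: the state names are hypothetical placeholders,
// and only four of them are shown to keep the sketch short.
type ExtensionState = "installing" | "installed" | "broken" | "removed";

// Which transitions the aggregate allows; anything else is rejected.
const allowedTransitions: Record<ExtensionState, ExtensionState[]> = {
  installing: ["installed", "broken"],
  installed: ["broken", "removed"],
  broken: ["installing", "removed"],
  removed: [],
};

class ExtensionAggregate {
  readonly name: string;
  private state: ExtensionState;

  constructor(name: string, state: ExtensionState = "installing") {
    this.name = name;
    this.state = state;
  }

  get currentState(): ExtensionState {
    return this.state;
  }

  // The one place where state changes, so the invariant is checked once.
  transitionTo(next: ExtensionState): void {
    if (allowedTransitions[this.state].indexOf(next) === -1) {
      throw new Error(`${this.name}: cannot go from ${this.state} to ${next}`);
    }
    this.state = next;
  }
}

// The single gateway: callers never touch the underlying store directly.
class ExtensionRepository {
  private readonly byName = new Map<string, ExtensionAggregate>();

  add(extension: ExtensionAggregate): void {
    if (this.byName.has(extension.name)) {
      throw new Error(`duplicate extension: ${extension.name}`);
    }
    this.byName.set(extension.name, extension);
  }

  get(name: string): ExtensionAggregate | undefined {
    return this.byName.get(name);
  }
}
```

The point of the shape is that "this extension is in state X" has exactly one owner. Every write path has to go through `transitionTo`, so an illegal transition fails loudly instead of leaving the catalog and the filesystem disagreeing.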

Days three and four replaced the legacy "rebuild everything from scratch" reconciliation with a minimal-diff approach and started closing tactical bugs that surfaced once the aggregate existed. Identity collisions on pull, incorrect bypass logic, manifest version inconsistencies, things that had been individually mysterious for months and suddenly had obvious fixes once the architecture was right.
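A rough sketch of what minimal-diff reconciliation can look like, assuming it compares what is on disk with what the catalog has recorded. The `Snapshot` shape (extension name mapped to a content fingerprint) and the function name are assumptions for illustration, not the actual implementation.

```typescript
// Hypothetical shape: each map goes from extension name to a content fingerprint.
type Snapshot = Map<string, string>;

interface ReconcilePlan {
  toAdd: string[];
  toUpdate: string[];
  toRemove: string[];
}

// Compute only the changes needed to bring the catalog in line with disk,
// instead of dropping everything and rebuilding from scratch.
function planReconcile(onDisk: Snapshot, inCatalog: Snapshot): ReconcilePlan {
  const plan: ReconcilePlan = { toAdd: [], toUpdate: [], toRemove: [] };

  onDisk.forEach((fingerprint, name) => {
    const recorded = inCatalog.get(name);
    if (recorded === undefined) {
      plan.toAdd.push(name); // on disk but unknown to the catalog
    } else if (recorded !== fingerprint) {
      plan.toUpdate.push(name); // known, but the contents changed
    }
  });

  inCatalog.forEach((_fingerprint, name) => {
    if (!onDisk.has(name)) {
      plan.toRemove.push(name); // recorded but no longer on disk
    }
  });

  return plan;
}
```

An unchanged extension never appears in the plan, which is what keeps the write surface small: entries that were already correct are not touched at all.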

Days five through eight collapsed the five copy-paste loaders into one unified loader, defeated the V8 module cache with fingerprinted import URLs, and shipped a new doctor command that renders aggregate state and offers repair. Two of the largest workstreams shipped on the same day. Neither was rushed and both went through the full plan review cycle before any code got written.
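The fingerprinted import URL trick can be sketched roughly as follows. ES module caches are keyed by the resolved specifier, so a query string that changes with the bundle contents makes a rebuilt module look like a new one. The helper names are hypothetical, and the small FNV-1a-style hash is only there to keep the sketch dependency-free; a real implementation would more likely use a cryptographic digest.

```typescript
// Hypothetical helpers: names and the choice of hash are illustrative.

// FNV-1a-style hash over UTF-16 code units, returned as 8 hex characters.
function fnv1a(text: string): string {
  let hash = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193) >>> 0;
  }
  return hash.toString(16).padStart(8, "0");
}

// Build an import specifier whose query string changes whenever the
// bundle contents change, so the module cache sees a different key.
function fingerprintedSpecifier(bundleUrl: string, bundleSource: string): string {
  const separator = bundleUrl.includes("?") ? "&" : "?";
  return `${bundleUrl}${separator}v=${fnv1a(bundleSource)}`;
}

// Intended use (not executed here):
//   const mod = await import(fingerprintedSpecifier(url, source));
```

One trade-off worth keeping in mind: the runtime generally does not evict the previous module instance, so each rebuild leaves an old copy in memory. That is usually an acceptable price compared with restarting the process.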

Eight calendar days: fourteen bug-class trackers closed, seven UAT issues absorbed.

The architect in the room

What held the six workstreams together was an architect agent that ran for the entire sprint, keeping full context across all of them. When the loader agent hit a semantic question about distinguishing partial loads from complete ones, it asked the architect, not me. The architect had the aggregate semantics from workstream one in context and answered in one round trip. That pattern repeated dozens of times across the eight days.

Some contracts crystallised mid-sprint. Ordering rules between filesystem, lockfile, and catalog writes. The uniqueness invariant across aggregates. How freshness should work as an aggregate query rather than a sentinel. The architect kept the live version and propagated it to whichever implementation agent needed it next.

The coordination model was a triad. I set direction and corrected framing when the architect drifted. The architect held context, fielded questions from implementation agents, turned my direction into structured briefs, and filed trackers when architectural debt surfaced. The implementation agents executed and reported findings back.

Each role did something the others couldn't. The architect couldn't ship six workstreams alone. Six parallel agents couldn't stay coherent without a context layer above them. And I couldn't hold the detail of six concurrent streams in my head, but I could tell when the overall direction was wrong.

The risk in this approach is real. The architect agent is a single point of failure. If it loses context or picks up the wrong invariant, every implementation agent downstream inherits the problem. It works because I catch the architect when it drifts. That means staying attentive enough to notice, because the setup is not self-healing.

Plans before code

Every workstream went through the same cycle. An agent proposes a plan, then a separate review pass reads it adversarially: what's missed, what breaks under concurrent writes, what assumes the wrong invariant. The plan gets revised and only then does implementation start.

The loader workstream's first plan had a type check that would have wrongly classified partial loads as complete. This was caught before any code existed. The doctor workstream's first plan had a bundle-orphan detector that would have misclassified live bundles on macOS because of symlink aliasing between /private/var and /var. Again, caught before any code existed. Same workstream's repair plan would have left bundle files as orphans for the next run, breaking idempotence. Again, caught... you get the point.
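The symlink-aliasing bug is easy to reproduce in miniature. The sketch below assumes the detector compares bundle paths on disk against paths the catalog references. The function names are hypothetical, and the path resolver is passed in so the example runs anywhere; in practice it would be something like `fs.realpathSync` in Node or `Deno.realPathSync` in Deno.

```typescript
// Hypothetical sketch: function and type names are illustrative.
type Realpath = (path: string) => string;

// A bundle is an orphan only if no catalog reference resolves to the
// same real path. Comparing raw strings would miss symlink aliases.
function findOrphanBundles(
  bundlesOnDisk: string[],
  referencedByCatalog: string[],
  realpath: Realpath,
): string[] {
  const live = new Set(referencedByCatalog.map(realpath));
  return bundlesOnDisk.filter((path) => !live.has(realpath(path)));
}

// Stand-in resolver that mimics macOS, where /var is a symlink to /private/var.
const macLikeRealpath: Realpath = (path) =>
  path.startsWith("/var/") ? "/private" + path : path;

const onDisk = ["/private/var/ext/a.js", "/private/var/ext/b.js"];
const referenced = ["/var/ext/a.js"];

// Naive comparison (identity resolver) flags the live bundle a.js as an orphan.
const naive = findOrphanBundles(onDisk, referenced, (path) => path);
// Canonicalised comparison flags only the bundle nothing references.
const canonical = findOrphanBundles(onDisk, referenced, macLikeRealpath);
```

With the identity resolver, `naive` contains both bundles, including the live one. With paths canonicalised first, `canonical` contains only `/private/var/ext/b.js`.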

These would have shipped. A code review would likely have missed them, because catching them requires holding cross-cutting context across a multi-file diff. A plan review pass takes minutes, while the bugs it catches take hours or days to find after shipping.

The testing story

After all six workstreams shipped, we turned to the UAT suite. Original plan: extend the existing twenty-eight test files to cover the new architecture.

That plan lasted about five minutes. The existing tests were written against the old architecture. Extending them meant grafting new assertions onto old assumptions. The right call was to delete all twenty-eight files and replace them from scratch. Full coverage matrix, end-to-end scenarios only, driving the CLI directly.

The architect agent produced a 396-entry test matrix: fifteen thousand words in a design document, spanning twelve sections. It was a full replacement.

Even writing the tests found things the rearchitecture hadn't actually fixed.

What the tests found

While building out the test matrix for extension state, the implementation agent noticed something odd. The matrix asserted that the doctor command's JSON output would surface all seven extension states. Empirically, only one showed up. Failures appeared in a completely different part of the output, with different field names.

An hour of code archaeology turned up the answer. Two parallel write paths still existed. The new reconciliation path went through the aggregate properly. A legacy fallback path bypassed it entirely. Which one fired depended on prior process state.

Two related findings came out of the same dig. One aggregate method had zero production callers: production code was writing through a direct catalog call that bypassed the invariant. And one of the seven states was never visible in the output because the transaction that recorded it also deleted the row.

None of this was user-visible, but the architectural debt was real: an invariant bypassed on one path, and observability that depended on process state. We filed it as a tracker with a three-piece resolution plan, one day after the last workstream merged.

The tests didn't just verify the rearchitecture. They showed us where it was still broken.

This is what agents excel at

Every team has a version of this in their backlog. The refactor that would make the next six months easier but can't compete with this quarter's features. That work rots, and it's the accumulation of deferred decisions that kills codebases, not bad code.

The rearchitecture ended up better than what a manual effort would have produced. Not because agents write better code but because the coordination model let us be more rigorous than we'd have been under the time pressure of a quarter-long project.

What if it hadn't worked? We'd have lost eight days, not six months. That's what actually changes the decision to take on architectural work. The risk drops low enough that you just try. If it doesn't land, you move on and come back when you're ready.

This is why leaning into agents changes the whole calculus of your SDLC. The work that was always too expensive to attempt becomes the work you do on a Tuesday.